Over the years we have done SEO (search engine optimization) audits for our clients and also for our own projects. We have read all the articles and books related to SEO and SEO analysis. This research has led us to create a process to review websites, find their flaws and improve SEO.

Note that this post explains how to perform an SEO audit. If you want to perform an actual optimization there are many other factors to consider, and it’s recommended that you speak with an SEO consultant. If, on the other hand, you want to delve deeper into how to implement your social strategy I recommend checking out my guide on the role of a Social Media Manager.

If you want to rank high on search engines, especially in a competitive market, your website must be well optimized. What does that mean? It means letting Google know that your site is better than others and more relevant to the user’s search.

A good SEO consultant will help you plan an editorial calendar for an effective content marketing campaign, based on the search volumes reported by tools such as Google’s Keyword Planner.

Preparation for SEO Audits

It is tempting to jump straight into the hands-on part of the analysis, but first you need to prepare the ground for the later stages of the job.

Crawling

Crawling is a term used to refer to the process during which search engine spiders “visit” your site, analyzing its content in order to include it in their indexes.

The first step of our analysis is to crawl the site with a tool, whether free or paid. This shows us what we are dealing with and gives us an overview of any errors or gaps in the website.

Crawling Tools

While you can create your own crawling tool to get a detailed analysis that follows custom parameters, it may not be the easiest choice.

We always rely on Screaming Frog’s SEO Spider to perform the crawl.

This tool is distributed on a freemium basis: if your site has fewer than 500 pages you can use it for free, otherwise the cost is £149 per year.

If you only need to run this analysis once and your website has more than 500 pages, a good solution is to search on Fiverr and have a website scan done for a few dollars.

You will receive the scan in Excel format, which you can refer to whenever needed.

Alternatively, you can also use Xenu’s Link Sleuth. Keep in mind though that this tool was created to look for broken links (i.e. links on your website pointing to a page that doesn’t exist) and not for SEO audits and analysis purposes like Screaming Frog.

Although it shows you the title and meta description for each page it was not created for an in-depth analysis like the one we are dealing with.

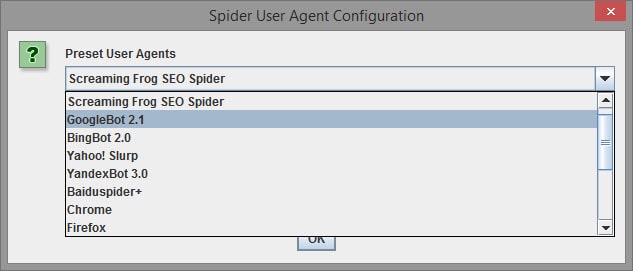

Crawl configuration

Once you’ve chosen the crawler you want to use, you need to configure it to behave like the search engine you’re interested in. Start by setting the crawler’s user agent as appropriate.

Googlebot - "Mozilla/5.0 (compatible; Googlebot/2.1; +https://www.google.com/bot.html)"

Bingbot - "Mozilla/5.0 (compatible; bingbot/2.0; +https://www.bing.com/bingbot.htm)"

At this point, we need to set up the crawler by deciding its “intelligence level”.

There has always been a heated debate about the intelligence of crawlers.

In fact, no one knows for sure if they take cookies, JavaScript and CSS into account when crawling a site. Basically, we don’t know if they look more like a browser or a simple curl script.

The advice is to disable cookies, JavaScript and CSS when crawling. This way we can diagnose any problems detected by more basic crawlers. These considerations will also be applicable to more advanced crawlers.
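As a rough illustration of what a "basic" crawler sees, the sketch below fetches a page with Python's standard library while identifying itself with one of the user-agent strings quoted above. A plain HTTP fetch like this runs no JavaScript, loads no CSS and keeps no cookies. The function names are made up for this example, and a dedicated crawler offers far more than this.

```python
from urllib.request import Request, urlopen

# User-agent strings as quoted in this article.
USER_AGENTS = {
    "googlebot": "Mozilla/5.0 (compatible; Googlebot/2.1; +https://www.google.com/bot.html)",
    "bingbot": "Mozilla/5.0 (compatible; bingbot/2.0; +https://www.bing.com/bingbot.htm)",
}


def build_request(url: str, bot: str = "googlebot") -> Request:
    """Build a request that identifies itself as the chosen crawler."""
    return Request(url, headers={"User-Agent": USER_AGENTS[bot]})


def fetch_like_basic_crawler(url: str, bot: str = "googlebot") -> tuple[int, str]:
    """Fetch the raw HTML only: no JavaScript runs, no CSS loads, no cookies are kept.

    Note: urlopen raises urllib.error.HTTPError for 4xx/5xx responses.
    """
    with urlopen(build_request(url, bot), timeout=10) as response:
        return response.status, response.read().decode("utf-8", errors="replace")
```

Calling `fetch_like_basic_crawler("https://example.com/")` (a placeholder URL) returns the status code and the HTML exactly as the server sent it, which you can compare with what you see in a browser.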

WebMaster Tools

Although crawling can give us a lot of information, it is still necessary to directly look at the search engines.

Since we don’t have free access to their algorithms we’ll have to settle for the Webmaster Tools that they make available to us to continue with the setting up of our SEO audit.

Most search engines offer a panel to diagnose various problems. In our case we will focus on Google Webmaster Tools (also known as Search Console) and Bing Webmaster Tools.

Analytics

At this point we need to collect information directly from our visitors. The best way to do this is to use a tool like Google Analytics.

There are many alternatives to the free Google tool. For our purposes any tool will do, as long as it allows us to track site visits and identify patterns.

We’re not done retrieving data for our analysis yet, but we’re well on our way. Let’s get started!

Accessibility

If your users or search engines can’t use your website… well, there’s no need to say more. The first step of our SEO audit will be to make sure that your website and all of its pages are accessible.

Robots.txt

The robots.txt file is used to restrict search engine crawlers’ access to certain pages or sections of your website. Although it is very useful, it can inadvertently block crawlers from visiting our website.

Some search engines may ignore these provisions, but engines such as Google and Bing respect the provisions shown through this file.

For example if your file shows the following text you are blocking access to all your pages, which will not be indexed.

User-agent: *
Disallow: /

In this first step you need to check your robots.txt file to make sure that the site is accessible and that you are not blocking important sections of your website. You can check via Webmaster Tools which pages are blocked from search engines.
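If you want to test a list of important URLs against a robots.txt file yourself, Python's standard `urllib.robotparser` module can do it offline. The sketch below is only an approximation of how a given search engine interprets the file; the helper name `blocked_urls` and the example URLs are assumptions made for illustration.

```python
from urllib.robotparser import RobotFileParser


def blocked_urls(robots_txt: str, urls: list[str], user_agent: str = "Googlebot") -> list[str]:
    """Return the URLs that the given robots.txt content forbids for user_agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())  # parse the text directly, no network access
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


if __name__ == "__main__":
    rules = "User-agent: *\nDisallow: /"
    pages = ["https://example.com/", "https://example.com/blog/"]
    # With the rules shown above, every page is reported as blocked.
    print(blocked_urls(rules, pages))
```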

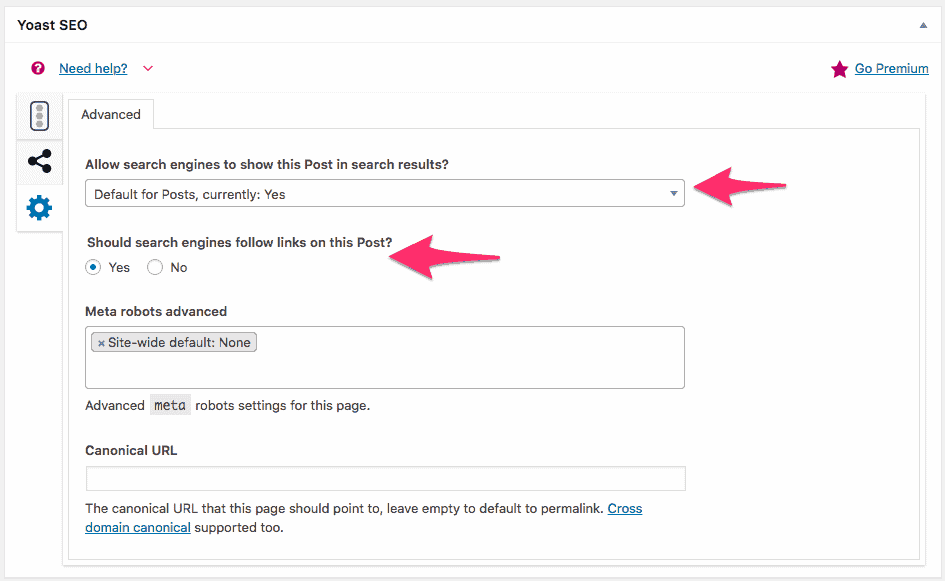

Robots Meta Tags

This meta tag is used to indicate to the crawler how to behave on that specific page. Using this tag we can tell it whether or not to index a page and whether or not to follow the links it contains.

As an example, if you use WordPress and the Yoast SEO plugin you have the ability to set this tag for each page.

While analyzing the accessibility of our website during an SEO audit we want to make sure that no page we want indexed is set to noindex.

For example a page that has this code inside the header will not be shown on search engines and links inside it will not be followed by the crawler:

<meta name="robots" content="noindex, nofollow" />

Deciding which pages to have indexed and which ones not to can be a more or less complex choice.

As a general rule we can say that all the pages of the site should be indexed except the legal pages (privacy policy, terms of service) that should be set as “noindex, follow”.

Obviously this is not always true and should be evaluated case by case according to the needs.
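To check pages in bulk, you can extract the robots meta tag from each page's HTML. The following is a minimal sketch using Python's built-in `html.parser`; the class and function names are invented for this example, and it only looks at the generic `robots` meta tag, not at crawler-specific tags or HTTP headers.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collect the directives found in <meta name="robots"> tags."""

    def __init__(self):
        super().__init__()
        self.directives: set[str] = set()

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag == "meta" and (attributes.get("name") or "").lower() == "robots":
            content = attributes.get("content") or ""
            # "noindex, nofollow" -> {"noindex", "nofollow"}
            self.directives.update(
                part.strip().lower() for part in content.split(",") if part.strip()
            )


def robots_directives(html: str) -> set[str]:
    """Return the set of robots directives declared in the page."""
    parser = RobotsMetaParser()
    parser.feed(html)
    return parser.directives
```

A page you want indexed should not have `"noindex"` in the returned set.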

HTTP Status Codes

Search engines, as well as users, will not be able to visit a page on your website if it returns an error HTTP status code, such as a 4xx or 5xx.

During the crawl you should identify all pages that report an error, paying particular attention to soft 404 errors (pages that show a “not found” message but return a 200 status code).

In case one of these pages is no longer available it will be necessary to set up a 301 redirect to a page with similar or relevant content.

Redirects

Speaking of redirects, it wouldn’t hurt to check all redirects to make sure that all of them are 301 HTTP redirects.

You need to avoid 302 redirects, JavaScript redirects and meta refresh redirects because these do not pass link juice to the landing page.
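Most crawlers export the status code of every URL, so the checks on errors and redirects can be automated. The sketch below assumes the crawl results are available as a mapping from URL to status code (a made-up input format). It cannot detect soft 404s, JavaScript redirects or meta refresh redirects, since those pages normally return a 200 status.

```python
def audit_status_codes(crawl: dict[str, int]) -> dict[str, list[str]]:
    """Group crawled URLs by the kind of problem their HTTP status code suggests."""
    report = {"client_errors": [], "server_errors": [], "non_301_redirects": []}
    for url, status in crawl.items():
        if 400 <= status < 500:
            report["client_errors"].append(url)
        elif 500 <= status < 600:
            report["server_errors"].append(url)
        elif status in (302, 303, 307, 308):
            # Redirects that are not a 301, to be reviewed one by one.
            report["non_301_redirects"].append(url)
    return report
```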

Sitemap XML

The XML sitemap is a map that search engines use to easily find all the content on your website. During an SEO audit, these questions need to be answered when we analyze the sitemap:

- Has your sitemap been reported through the webmaster tool? Search engines can find the sitemap themselves, but you should report it specifically.

- Is your sitemap an XML file with the right syntax? Does it follow the sitemap protocol to the letter? Search engines expect a specific format for a website sitemap, otherwise they may not process the sitemap correctly.

- Have you found crawled pages that are not in the sitemap? Make sure that your sitemap is always up to date

- Are there any pages listed in the sitemap that were not found through the crawl? If yes, let me introduce you to orphan pages, which are pages that do not have any links within your website. Find the appropriate place and insert a link to make sure we have at least one internal backlink.
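The last two questions boil down to comparing two sets of URLs. The sketch below parses a sitemap with Python's `xml.etree.ElementTree` (using the namespace defined by the sitemap protocol) and compares it with the URLs found by the crawl. The function names and the shape of the crawl data are assumptions for illustration; sitemap index files are not handled.

```python
import xml.etree.ElementTree as ET

# Namespace used by the sitemap protocol.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_urls(xml_text: str) -> set[str]:
    """Extract every <loc> URL from a sitemap document."""
    root = ET.fromstring(xml_text)
    return {loc.text.strip() for loc in root.findall("sm:url/sm:loc", SITEMAP_NS) if loc.text}


def compare_with_crawl(sitemap: set[str], crawled: set[str]) -> dict[str, set[str]]:
    """Find crawled pages missing from the sitemap and possible orphan pages."""
    return {
        "missing_from_sitemap": crawled - sitemap,
        "orphan_candidates": sitemap - crawled,
    }
```

If `ET.fromstring` raises a `ParseError`, the file is not well-formed XML, which already answers the question about syntax.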

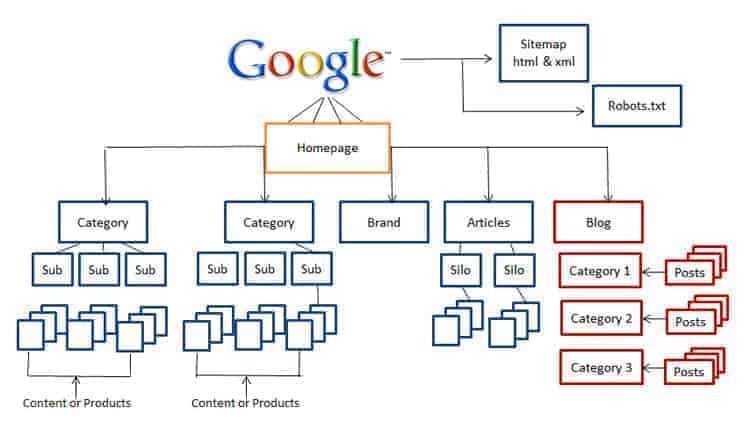

Website Architecture

The architecture of your website defines its structure, i.e. its vertical depth and its horizontal width.

Evaluate how many clicks are required to get to your most important pages, looking for a balance between horizontal and vertical levels.
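The number of clicks needed to reach a page can be computed from the internal links found during the crawl. The sketch below runs a breadth-first search starting from the home page; the input format (a dictionary mapping each page to the pages it links to) is an assumption, so adapt it to whatever your crawler exports.

```python
from collections import deque


def click_depths(links: dict[str, list[str]], home: str) -> dict[str, int]:
    """Return the minimum number of clicks from the home page to each reachable page."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:  # first visit is the shortest path in a BFS
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths
```

Pages that never appear in the result cannot be reached by following links from the home page, and important pages with a high depth are candidates for a more prominent internal link.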

Flash and JavaScript elements

Some elements are inaccessible to crawlers. Although nowadays most menus, even the most complex ones, are built using only CSS, you can still come across sites with menus built in Flash or JavaScript.

Even though search engines have become smarter over the years, the safest choice is still to avoid these kinds of elements, especially for menus. If the crawler is not able to “see” the menu it will not follow the links and will not understand the architecture of your website.

If you want to evaluate the “browsability” of your site you can run two scans: one with JavaScript enabled and one with JavaScript disabled. In this way simply by comparing the results you can identify the sections that are inaccessible without the use of JavaScript.

Website performance

Online users have a very short attention span: if a page takes more than 2 seconds to load completely, many of them will leave your site and may never come back.

Likewise, Google rewards fast sites, since they offer a better user experience. And we know for sure that Google’s main focus is on user experience.

Moreover, the time available to crawlers is limited, and the number of websites keeps increasing. If your pages load quickly, the crawler can scan a larger number of them in the same amount of time and will therefore perform a more thorough scan of your site.

You can check the speed of your website using various tools. We have written a guide on optimizing your website which covers the topic of speed and performance for WordPress in depth.

Indexability

In our SEO audits so far we have identified which pages are accessible to search engines. Now we need to define which pages are actually being indexed by search engines.

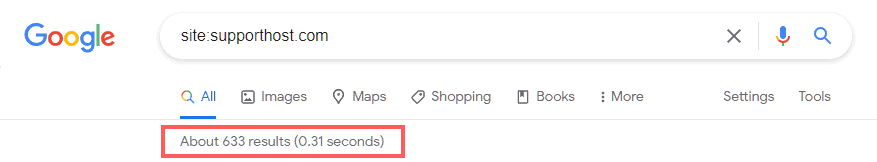

The “site:” command

Almost all search engines have the “site:” command that allows you to perform a search for the contents of a specific website. This type of search gives us an estimate of the number of pages indexed by the engine on which we perform the search.

For example if we search “site:supporthost.it” we see that there are 315 indexed pages:

This number of indexed pages is not accurate but it is still useful for us to have an estimate (we will call this value index count). You already know the number of pages on your site thanks to the crawl we did and the XML sitemap (which we’ll call count) and we’ll then be in one of these scenarios:

- The index count and the count obtained from the crawl and sitemap are more or less equal. This is the ideal scenario, since the search engine has indexed all the pages of your website.

- The index count is higher than the crawl count. In this case it is very likely that your site has duplicate content.

- Index count is lower than the count. This indicates that search engines are not indexing many of the pages on your website. You have probably already identified this in the previous step. If not, you should check whether your website has been penalized.
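The three scenarios above can be expressed as a small comparison. In this sketch the 10% tolerance used to decide that the two numbers are "more or less equal" is an arbitrary assumption, as are the function name and the labels it returns.

```python
def compare_counts(index_count: int, site_count: int, tolerance: float = 0.10) -> str:
    """Classify the relationship between indexed pages and pages known from crawl/sitemap."""
    if site_count <= 0:
        raise ValueError("site_count must be positive")
    ratio = index_count / site_count
    if abs(ratio - 1) <= tolerance:
        return "ok"  # the engine has indexed roughly every page
    if ratio > 1:
        return "possible_duplicate_content"
    return "under_indexed"  # check accessibility, then penalties
```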

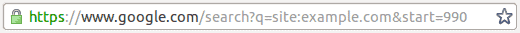

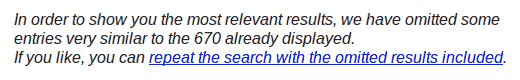

If you suspect that your website has duplicate content then search again with the command “site:” and add “&start=990” to the end of the url in the browser:

And check to see if Google shows you a message that there is duplicate content like this:

If you have a problem with duplicate content don’t despair, we will fix it in the next section of our SEO review.
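If you prefer not to edit the address by hand, the search URL with the `start` parameter described above can be built with `urllib.parse`. This is just a convenience sketch: the helper name is made up, and the resulting URL is meant to be opened manually in the browser.

```python
from urllib.parse import urlencode


def site_search_url(domain: str, start: int = 0) -> str:
    """Build a Google search URL for the "site:" command, optionally with a start offset."""
    params = {"q": f"site:{domain}"}
    if start:
        params["start"] = str(start)
    return "https://www.google.com/search?" + urlencode(params)


if __name__ == "__main__":
    print(site_search_url("supporthost.it", start=990))
```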

Checking the quality of indexing

Let’s now go into more detail for our SEO audit. Let’s see if and how search engines index our most important pages.

Doing our search “site:” the pages should be shown in order of importance, starting obviously from the home page. If this is not the case there can be two types of problems:

- You need to revise the architecture of your website to give more importance to the pages that need to be higher up.

- It is possible that the page is not completely shown in the search results.

Page Searches

Perform a search for the specific page to see if it is included in the index or not:

If you can’t find the page in the search engine index check to see if it is accessible. If it is accessible make sure you have not received any penalties.

Brand Searches

After checking that your pages are indexed you need to check that your site is well ranked if you do a search with your brand.

Do a search for the name of your brand or business. Normally your site should appear at the top of the results. If your site is not there it may have been penalized. Let’s see how to investigate this further.

Search Engine Penalties

I hope you’ve made it this far in your website’s SEO audit without finding anything that would suggest a penalty. But if you believe your site has suffered a penalty here are four steps you can take to fix it.

1. Make sure you have been penalized

It often happens that what you believed to be a penalty was nothing more than an error (for example a noindex tag inserted inadvertently). So, before running for cover, let’s make sure that it is really a penalty.

In most cases, it will be an obvious penalty. You may have received a notification through Webmaster Tools. Or your page is not in the index despite being normally accessible.

It’s also important to note that your site may have simply lost traffic due to a Google algorithm update, updates that are becoming more frequent and sometimes catastrophic for some sites. Although this is not a true penalty it should be handled as such.

2. Identify the reason for the penalty

As soon as you are sure that you have received a penalty, you need to understand the reason for it. If you have received a notification through Webmaster Tools, you already have your answer.

If your site has been the victim of an algorithm update the situation will certainly be more complex. You will have to search the net for information about the update until you find the solution.

When such an update is applied to the algorithm there are always many sites that suffer the consequences, so it won’t be difficult to understand the cause of the problem by researching Facebook groups, forums and blogs, in both Italian and English.

3. Fix the problem that led to the penalty

Once you have identified the reason for the penalty, fixing the cause of the problem will definitely be easy.

4. Request for reconsideration

Once you have fixed the problem you will have to request a reconsideration from the search engine that penalized you. Keep in mind that if your site was only a victim of an algorithm update this kind of request will be completely useless and you’ll just have to wait.

For more information read Google’s guide to reconsiderations

SEO On Page

Now let’s move to another aspect of your website’s SEO: the on-page features that affect its ranking.

URLs

When we perform an SEO analysis of a website by analyzing its URLs we need to ask ourselves a few questions:

- Is the URL short and readable? A good rule of thumb would be to keep URLs under 115 characters in length.

- Does the URL include relevant keywords? It is important to use a URL that describes its content.

- Is the URL using subfolders instead of subdomains? Subdomains are treated as separate domains by search engines when it comes to passing link juice.

- Is the URL using dynamic parameters? Whenever possible, use only static URLs. If you can’t avoid using dynamic parameters make sure to register them in Google Webmaster Tools.

- Does the URL use the minus sign to separate words? The underscore sign was not appreciated by some search engines in the past. To sleep soundly, use the minus sign to separate words in the URL.
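Several of these checks are mechanical and can be run over every URL found by the crawl. The sketch below covers length, dynamic parameters, underscores and subdomains. Whether a URL contains relevant keywords still needs a human eye. The function name, the issue labels and the `main_host` parameter are assumptions for illustration.

```python
from urllib.parse import urlsplit

MAX_URL_LENGTH = 115  # rule of thumb mentioned above


def url_issues(url: str, main_host: str) -> list[str]:
    """Return the list of URL guidelines that the given URL does not follow."""
    parts = urlsplit(url)
    issues = []
    if len(url) >= MAX_URL_LENGTH:
        issues.append("too long")
    if parts.query:
        issues.append("dynamic parameters")
    if "_" in parts.path:
        issues.append("underscores")
    host = parts.hostname or ""
    if host not in (main_host, "www." + main_host):
        issues.append("subdomain")
    return issues
```

For example, `url_issues("https://blog.example.com/seo_audit?id=3", "example.com")` reports dynamic parameters, underscores and a subdomain, while `https://example.com/seo-audit-guide` passes all four checks.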

When analyzing URLs for an entire domain, we must ask ourselves the following questions:

- Do most of the URLs follow the points listed above? Or are they poorly optimized?

- Based on the keywords covered by our site is the domain name appropriate? Does it contain the keywords? Does it look like spam?

Duplicate content with different URLs

During our SEO audits, in addition to checking the optimization of URLs, it is important to note if there is any duplicate content due to them.

URLs are almost always responsible for duplicate content. If two different URLs show the same content, the search engine believes that these are two different pages.

Comparing the index count with the count we mentioned before should give you an idea about the duplicate content on your website.

To be on the safe side you should check your site for the most common errors that lead to duplicate content due to URLs.
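One way to spot these errors is to normalize every crawled URL and see which ones collapse into the same address. The sketch below ignores the protocol, a leading `www.`, a trailing slash and a few tracking parameters. Which variations really serve the same content depends on your site, so both this list of ignored parameters and the normalization rules are assumptions to adapt.

```python
from collections import defaultdict
from urllib.parse import parse_qsl, urlencode, urlsplit

# Hypothetical list of parameters that do not change the page content.
IGNORED_PARAMS = {"utm_source", "utm_medium", "utm_campaign", "sessionid"}


def normalize(url: str) -> str:
    """Reduce a URL to a key that ignores common sources of URL-based duplication."""
    parts = urlsplit(url)
    host = (parts.hostname or "").lower().removeprefix("www.")
    path = parts.path.rstrip("/") or "/"
    kept = sorted((k, v) for k, v in parse_qsl(parts.query) if k not in IGNORED_PARAMS)
    query = urlencode(kept)
    return host + path + ("?" + query if query else "")


def duplicate_groups(urls: list[str]) -> dict[str, list[str]]:
    """Group URLs that normalize to the same key; keep only groups with 2+ members."""
    groups = defaultdict(list)
    for url in urls:
        groups[normalize(url)].append(url)
    return {key: members for key, members in groups.items() if len(members) > 1}
```

Each group returned is a set of addresses that probably show the same page and should be consolidated, for example with a 301 redirect or a canonical link.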

In the section on content analysis we will discuss other systems to identify duplicate content.

Content

It is often said that “content is king”. So let’s treat it the way it deserves to be treated.

To investigate the content of a page we have several tools at our disposal. The easiest to use is the Google cache that allows us to see a text-only version of our page.

Alternatively you can also use SEO Browser or Browseo. These two browsers show us a text-only version of our webpage and also include other interesting data about the page such as the page title and meta description among others.

Regardless of the tool you decide to use it will be necessary to find an answer to the following questions:

- Does the page contain enough content? There is no precise rule, but the content should contain at least 300 words.

- Does the content have a value for those who read it? This is obviously a subjective evaluation, but we can get some values by checking the bounce rate and the time spent on the page by checking the analytics.

- Does your content contain the keywords you want to rank for? Do these keywords appear in the first paragraph? If you want to rank for a keyword you need to make sure it is present in the content.

- Does your content look like spam? Have you overdone your keyword density, i.e., have you repeated your keyword too many times to the point of making it heavy reading?

- Are there typos or grammatical errors? If you don’t want to lose credibility, be sure to check your text for errors.

- Is the content easily readable? There are various metrics to estimate the readability of your content, such as Flesch Reading Ease and the Fog Index. However, these indices are designed for the English language and do not work as well with Italian.

- Are search engines able to check your content? If your content is in Flash, or if it is text within an image, or if the JavaScript is too complex, search engines may not be able to see the content on your page.
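Some of these questions can be answered with simple counts. The sketch below computes the word count, checks the 300-word guideline, looks for the keyword in the first paragraph and estimates keyword density. Definitions of keyword density vary: here it is the share of words that belong to an occurrence of the keyword, which is an assumption rather than an official formula. Paragraphs are assumed to be separated by blank lines.

```python
import re

MIN_WORDS = 300  # guideline mentioned above


def tokenize(text: str) -> list[str]:
    """Split text into lowercase words."""
    return re.findall(r"\w+", text.lower())


def keyword_density(text: str, keyword: str) -> float:
    """Percentage of words in the text that are part of an occurrence of the keyword."""
    tokens = tokenize(text)
    phrase = tokenize(keyword)
    if not tokens or not phrase:
        return 0.0
    size = len(phrase)
    hits = sum(tokens[i:i + size] == phrase for i in range(len(tokens) - size + 1))
    return 100 * hits * size / len(tokens)


def content_report(text: str, keyword: str) -> dict:
    """Summarize the mechanical content checks for one page."""
    tokens = tokenize(text)
    first_paragraph = text.strip().split("\n\n")[0]
    return {
        "word_count": len(tokens),
        "enough_content": len(tokens) >= MIN_WORDS,
        "keyword_in_first_paragraph": keyword.lower() in first_paragraph.lower(),
        "keyword_density_pct": keyword_density(text, keyword),
    }
```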

While analyzing your content we will focus on 3 main areas:

1. Information architecture

The architecture of your website defines how information is presented on the site. During this phase you should make sure that each page of your website has a specific focus. You should also make sure that every keyword you are interested in is represented by a page on your website.

2. Cannibalization

Keyword cannibalization occurs when more than one page tries to rank for the same keyword. This creates confusion for both the search engine and users.

To identify cannibalization you can create a keyword index to map all the pages on your website. Once you have identified the pages that use the same keyword you can merge the pages or edit one of them to rank for a different keyword.
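A keyword index like the one just described can be as simple as a dictionary. The sketch below assumes you have already written down the main target keyword of each page (the input mapping is hypothetical) and returns the keywords targeted by more than one page.

```python
from collections import defaultdict


def cannibalized_keywords(page_keywords: dict[str, str]) -> dict[str, list[str]]:
    """Map each keyword targeted by two or more pages to the list of those pages."""
    index = defaultdict(list)
    for url, keyword in page_keywords.items():
        index[keyword.strip().lower()].append(url)
    return {keyword: urls for keyword, urls in index.items() if len(urls) > 1}
```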

3. Duplicate content

Your site has duplicate content when it has multiple pages with the same or similar content. This type of problem can happen both between pages on the same site and between different sites.

A great tool for identifying duplicate content on your website is Siteliner

To identify duplicate content on pages from other sites you can use Copyscape

HTML Markup

The HTML code of your pages contains the most important elements for SEO, so you need to check that your code is valid for W3C standards.

W3C offers a service to validate your HTML code and a service to validate your CSS to help you check your website.

Title

The title is the most identifying part of the page. It’s what shows up in search engine results, what you see at the top of your browser, and what people notice on social media. That’s why it’s important to check the titles of your website.

As you check the title you need to ask yourself the following questions:

- Is the title short enough? It is good practice to keep the title under 70 characters in length. A longer title will be cut off by search engines and leaves people little room to add a comment when sharing it on Twitter.

- Does the title describe the content of the page? Avoid using a clickbait title that doesn’t actually describe the content if you don’t want to see your bounce rate skyrocket.

- Does the title contain the keyword you want to rank for? It is a good idea to use the keyword you want to rank for in the title of your page, and use it at the beginning of the title. This is the most important on-page SEO optimization.

- Is your title over-optimized? Try to write the title for your users, not for the search engines, and above all avoid keyword stuffing.

Make sure each page has a unique title. You can use the crawl to perform this analysis. Alternatively you can use Google Webmaster Tools to get this information (check Optimization -> HTML Improvements).
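If your crawler exports the title of every page, the length and uniqueness checks take only a few lines. The sketch below assumes a mapping from URL to title (a made-up input format) and uses the 70-character guideline mentioned above.

```python
from collections import defaultdict

MAX_TITLE_LENGTH = 70  # guideline mentioned above


def title_issues(titles: dict[str, str]) -> dict[str, list[str]]:
    """Report pages with a missing, overly long or duplicated title."""
    report = {"missing": [], "too_long": [], "duplicate": []}
    pages_by_title = defaultdict(list)
    for url, title in titles.items():
        cleaned = title.strip()
        if not cleaned:
            report["missing"].append(url)
            continue
        if len(cleaned) >= MAX_TITLE_LENGTH:
            report["too_long"].append(url)
        pages_by_title[cleaned.lower()].append(url)
    for urls in pages_by_title.values():
        if len(urls) > 1:
            report["duplicate"].extend(urls)
    return report
```

The same function works for meta descriptions if you pass the descriptions instead of the titles and adjust the length limit.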

Meta Description

The meta description is no longer used by search engines as a ranking factor, but it influences ranking indirectly, since it affects the CTR (the percentage of people who click on your result after seeing it).

The questions to ask yourself regarding the meta description are the same as those listed above for the title. You need to keep the length under 155 characters and avoid over-optimizing the text.

As with the title, make sure each page has a unique meta description. You can use the crawl to perform this analysis. Alternatively you can use Google Webmaster Tools to get this information (check Optimization -> HTML Improvements).

Other Tags <head>

The title and description tags are undoubtedly the most important from an SEO point of view within the <head> section. Let’s do a further check:

- Do any of your pages use the meta keyword field? Over time, this field has been associated with spam, so to be on the safe side, avoid using it completely.

- Are you using a rel="canonical" link in any of your pages? If you are using it make sure you are using it correctly to avoid the problem of duplicate content.

- Do you have content split across multiple pages? Are you using rel="prev" and rel="next" correctly? Adding these parameters to links helps search engines identify pagination on your website.

Images

A picture is worth 1000 words, but not to search engines. That’s why we need to use metadata in order to give more information to search engines.

When analyzing an image from an SEO point of view the most important part is the file name and the alt text. Both texts should describe the image and where possible contain the keyword you want to rank for. If you want to learn more about this topic check out our article on how to optimize images for SEO where I also explain how to automatically pick up the attributes of the images you upload to WordPress.
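To find images without alt text, you can scan the HTML of each page. The sketch below uses Python's built-in `html.parser` and lists the `src` of every image whose `alt` attribute is missing or empty. The names are invented for this example, and purely decorative images may legitimately have an empty alt, so treat the output as a list to review.

```python
from html.parser import HTMLParser


class ImageAltParser(HTMLParser):
    """Collect the src of <img> tags that have no usable alt text."""

    def __init__(self):
        super().__init__()
        self.missing_alt: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag == "img":
            attributes = dict(attrs)
            if not (attributes.get("alt") or "").strip():
                self.missing_alt.append(attributes.get("src") or "")


def images_missing_alt(html: str) -> list[str]:
    """Return the image sources that need an alt text."""
    parser = ImageAltParser()
    parser.feed(html)
    return parser.missing_alt
```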

Outbound links

When one page links to another, the page receiving the link is effectively receiving a vote. That’s why it’s important to make sure your outbound links point to quality sites.

In fact, if you are giving a vote to a low-quality site or, worse, a spam site, your own site is probably low quality too.

To evaluate the links during SEO audits it is worth asking yourself the following questions:

- Do the links point to a reliable site? As mentioned above if you send links from your site to a spam site Google may come to the conclusion that your site is a spam site.

- Are the links relevant to the content of the page? The links on your page should point to useful resources or relevant content. If you add a link to a page that has no relevance to the topic of your page it will worsen the user experience and reduce the relevance of your page to the topic in the eyes of Google.

- Do the links on the page use relevant anchor text? Does the anchor text use relevant keywords? The anchor text of a link should describe the text of the page the link points to. This helps users to understand if they want to click on the link and helps the search engine to understand what the landing page is about.

- Are any of the links broken? If you click on the link and receive a 4xx or 5xx error, the link is considered broken. You can find broken links by crawling or by using a Link Checker

- Do your links use unnecessary redirects? If your internal links are performing a redirect you are diluting the link juice going from one page to another on your website. Make sure your internal links are pointing to the correct pages.

- Do you have nofollow links? If you can control your links (and so they are not links added to your site by your users) you should let the link juice flow without blockages.

When you control the links on your website you should control the distribution of your links so that the most important pages are the ones that receive the most links.

Be careful not to create a situation identifiable as rank sculpting but at the same time you should give more importance to the main pages of your website.

Other Tags <Body>

Apart from images and links there are other important elements that we can find in the <body> section of your web page during an SEO review. Here are the questions we need to ask ourselves during the analysis:

- Does the page contain an h1 title? Does it contain one of the keywords you want to rank for? Headings are not as important as the title tag, but they are still relevant and should contain the keyword you want to rank for. Read how to use header tags.

- Does the page use frames or iframes? If you are using a frame to display content then search engines will not associate that content with your page.

- Relationship between content and advertising. Make sure your content is not overwhelmed with ads.
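The first two questions can be checked automatically. The sketch below counts `h1` headings and `frame`/`iframe` elements in a page with Python's built-in `html.parser`. The class and function names are made up, and the balance between content and advertising still has to be judged by eye.

```python
from html.parser import HTMLParser


class BodyTagCounter(HTMLParser):
    """Count h1 headings and frame/iframe elements in a page."""

    def __init__(self):
        super().__init__()
        self.h1_count = 0
        self.frame_count = 0

    def handle_starttag(self, tag, attrs):
        if tag == "h1":
            self.h1_count += 1
        elif tag in ("frame", "iframe"):
            self.frame_count += 1


def body_tag_report(html: str) -> dict[str, int]:
    """Return how many h1 headings and frames the page contains."""
    parser = BodyTagCounter()
    parser.feed(html)
    return {"h1": parser.h1_count, "frames": parser.frame_count}
```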

SEO Off Page

On-page SEO parameters are undoubtedly an important part of our analysis, but it is also necessary to perform a thorough check of off-page SEO.

Popularity

Although the most popular sites are not always the most useful, we cannot ignore this parameter, which helps predict the success of a website. When evaluating the popularity of a website we have to answer some questions:

- Is your site growing? Simply by checking your analytics you can see if you are gaining or losing traffic compared to previous months/weeks.

- What is your popularity compared to other similar sites? You can use third party services such as Alexa, Quantcast or SimilarWeb to assess the situation.

- Do you get backlinks from popular sites? You can use a tool to check the popularity of your site using a link-based metric like mozRank to evaluate your popularity and that of the sites linking to you.

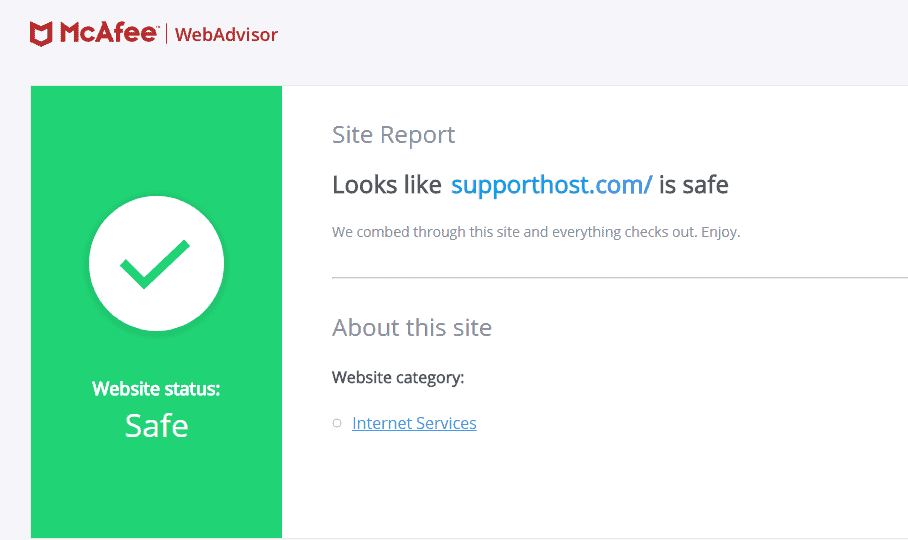

Reliability

The reliability of a website is a very subjective parameter, since everyone has their own interpretation of the term. To avoid personal bias, it is necessary to rely on behaviors that are widely considered untrustworthy.

Untrustworthy behaviors can be divided into various categories, but in the case of our SEO audit we will focus on malware and spam. You can rely on blacklists such as DNS-BH or Google’s API to monitor your site. You may also want to use a tool like SiteAdvisor.

To check if your site could be considered spam by Google you should check the following points:

- Keyword Stuffing – it involves creating content with too high keyword density

- Hidden or invisible text – exploiting differences between browser and spider technologies to hide text from users while showing it to spiders. It is usually done by displaying text in the same color as the background.

- Cloaking – showing different versions of the same page to users and search engines, usually done by exploiting IPs or user agent

Even if your site is trustworthy, it is necessary to check the reliability of your neighbors, i.e. sites that link to your site and are linked from your site.

Backlink Profile

The quality of your site is defined by the quality of the sites that link to it. For this reason it is very important to analyze your backlink profile and see how you can improve it.

There are a number of tools available for this purpose including Webmaster Tools, Open Site Explorer, Majestic SEO and Ahrefs.

Here is what to check for regarding your backlinks:

- How many unique domains link to your website? The more links the better, but having 100 links from 100 different domains is much more valuable than having 100 links from the same domain.

- What percentage of backlinks are nofollow? Ideally most backlinks will be dofollow, but keep in mind that a site with no nofollow backlinks at all looks suspicious.

- Does the distribution of anchor text appear to be natural? If too many backlinks use “exact match anchor text” search engines will suspect that those backlinks are not natural.

- Are the backlinks coming to you from themed sites? If the links to your site are on sites on the same topic this helps your site be seen as authoritative for that topic.

- How popular, trustworthy and authoritative are the sites linking to you? If you are linked to by too many low quality sites you will also be considered low quality.
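The first three checks can be computed from a backlink export. The sketch below assumes each backlink is a dictionary with `source`, `anchor` and `nofollow` keys, which is a made-up format: the tools mentioned above each have their own export layout, so the field names will need adapting.

```python
from collections import Counter
from urllib.parse import urlsplit


def backlink_summary(backlinks: list[dict]) -> dict:
    """Summarize unique linking domains, nofollow share and the most common anchor texts."""
    total = len(backlinks)
    domains = {urlsplit(link["source"]).hostname for link in backlinks}
    nofollow = sum(1 for link in backlinks if link["nofollow"])
    anchors = Counter(link["anchor"].strip().lower() for link in backlinks)
    return {
        "total_links": total,
        "unique_domains": len(domains),
        "nofollow_pct": 100 * nofollow / total if total else 0.0,
        "top_anchors": anchors.most_common(3),
    }
```

A single anchor text that accounts for most of the links in `top_anchors` is a hint that the distribution may not look natural.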

Authority

The authority of a website is defined by a combination of factors such as the quality and quantity of backlinks, its popularity and its reliability.

To estimate the authority of your website SEOmoz provides us with two very important metrics: Page Authority and Domain Authority. Page Authority helps us understand how high the page will get on search engines while Domain Authority refers to the entire website.

These two metrics aggregate different link metrics (mozRank and moztrust among others) to give you a simple parameter to compare the strength of a page or domain.

Competitive Analysis

Now that you’ve finished the SEO audit of your website, it’s time to start the same analysis on your competitors. It’s a long job, but a thorough knowledge of your competitors will allow you to understand what they’re doing better than you, and what their weaknesses are, so you can beat them in the long run.

SEO Report

Once you have finished the SEO audit of your site and that of your competitors you should put all your observations into a report that anyone can follow to take the necessary steps to improve the analyzed website.

If necessary, include some additional information so that the report is understandable to a manager or executive who is not necessarily a technician.

Prioritize so that the most important actions that will have the greatest impact on the website’s ranking are taken first, and leave the less important things and finishing touches to the end.

Try to be specific and make recommendations that can be used as guidelines for future tasks. Pointing out something like “write better headlines” makes little sense as it is generic.

Glossary

Anchor Text: the clickable text in a link, which is displayed to the user as underlined and gives an idea of the type of content found on the landing page.

Backlink: a link on an external site that points back to our website.

Keyword: the search term for which you want to rank your site on Google. It usually consists of more than one word, in which case it is also called a keyphrase.

Keyword Density: the percentage of keywords in the entire text. Usually we try to keep this value between 1-2%.

Keyword Stuffing: the use of a keyword an excessive number of times to the point of making the text not very fluent. This practice is penalized by search engines

Link Juice: Indicates the sum of the three values passed through links from one document to another: thematization, pagerank, authority.

Malware: applications that present a harassing, undesirable or hidden behavior

Rank Sculpting: practice aimed at giving more importance to specific pages by arranging internal links in order to give a greater number of links to pages of our site that we want to appear higher than the other pages of the website

SERP: Search Engine Results Page. It is necessary to have quality content on our website if we want to improve its positioning and reach the first page.

Spam: mass sending of unwanted emails. A spam site is a site with no value for the user, created only to link to certain sites and push them up in the SERPs.

User Agent: In computer science, the user agent is an application installed on the user’s computer that connects to a server process. Examples of user agents are web browsers, media players, crawlers and mail client programs.
